News

Our first data-centric LLM competition begins! Please visit the competition's official websites, FT-Data Ranker (1B Track, 7B Track), for more information.

Introduction

This is a reference LLM from Data-Juicer.

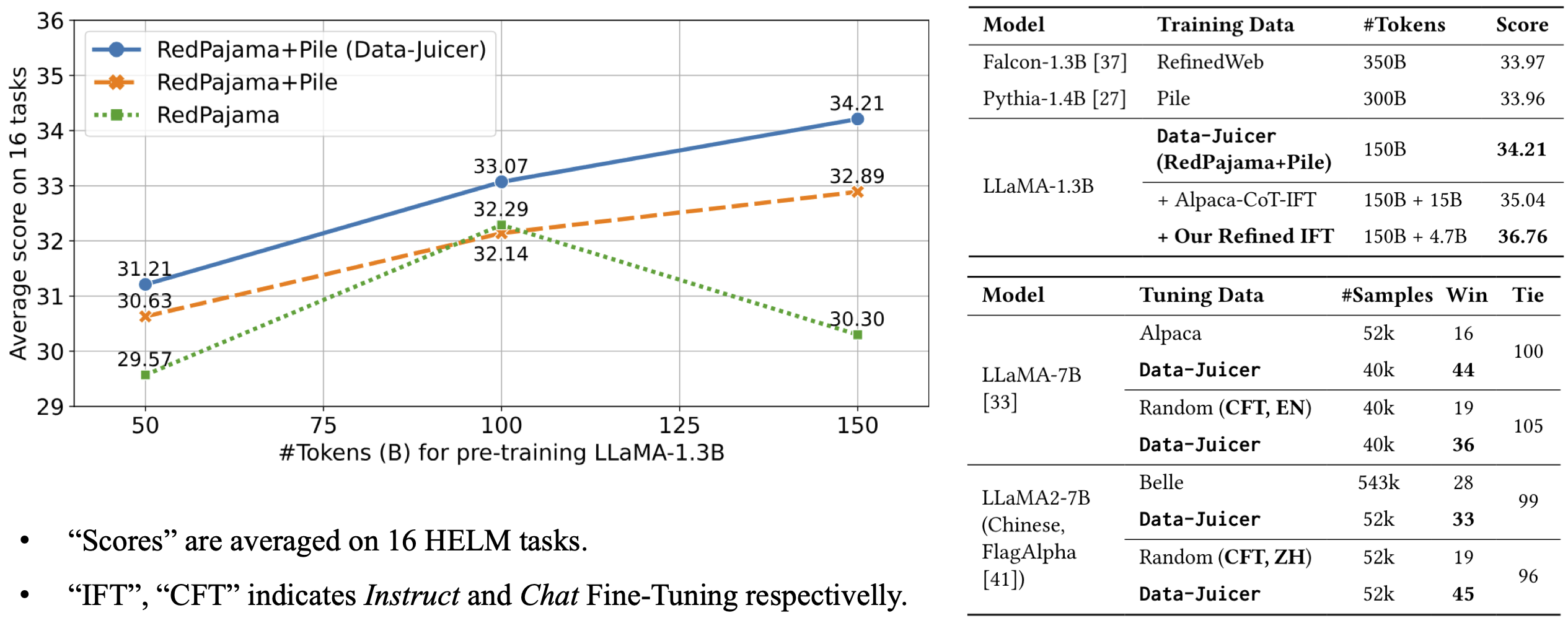

The model architecture is LLaMA-1.3B, and we adopt the OpenLLaMA implementation. The model is pre-trained on 150B tokens of Data-Juicer's refined RedPajama and Pile, and 4.7B tokens of Data-Juicer's refined instruction data. It achieves an average score of 36.76 over 16 HELM tasks, improving on OpenLLaMA-DJ-150B by 2.55 points.
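To put these figures in context, here is a minimal arithmetic sketch in Python. It makes two assumptions: that the 4.7B instruction tokens come on top of the 150B pre-training tokens, and that the 2.55-point gain is an absolute difference in the average HELM score. The constant and function names are illustrative only and are not part of Data-Juicer.

```python
# Figures quoted in the model description above.
PRETRAIN_TOKENS_B = 150.0   # refined RedPajama + Pile, in billions of tokens
INSTRUCT_TOKENS_B = 4.7     # refined instruction data, in billions of tokens
AVG_HELM_SCORE = 36.76      # average score over 16 HELM tasks
GAIN_POINTS = 2.55          # gain over OpenLLaMA-DJ-150B


def total_tokens_b() -> float:
    """Total training tokens in billions (assumes the two sets are additive)."""
    return PRETRAIN_TOKENS_B + INSTRUCT_TOKENS_B


def instruct_share() -> float:
    """Fraction of all training tokens that are instruction data."""
    return INSTRUCT_TOKENS_B / total_tokens_b()


def implied_baseline_score() -> float:
    """Average HELM score of OpenLLaMA-DJ-150B implied by the reported gain
    (assumes the gain is an absolute point difference)."""
    return AVG_HELM_SCORE - GAIN_POINTS


def relative_gain() -> float:
    """Gain expressed as a fraction of the implied baseline score."""
    return GAIN_POINTS / implied_baseline_score()


if __name__ == "__main__":
    print(f"Total tokens: {total_tokens_b():.1f}B")
    print(f"Instruction share: {instruct_share():.2%}")
    print(f"Implied baseline score: {implied_baseline_score():.2f}")
    print(f"Relative gain: {relative_gain():.2%}")
```

Under these assumptions, the instruction data makes up only a small fraction of the total token budget, while the implied baseline score follows directly by subtracting the reported gain from 36.76.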

For more details, please refer to our paper.